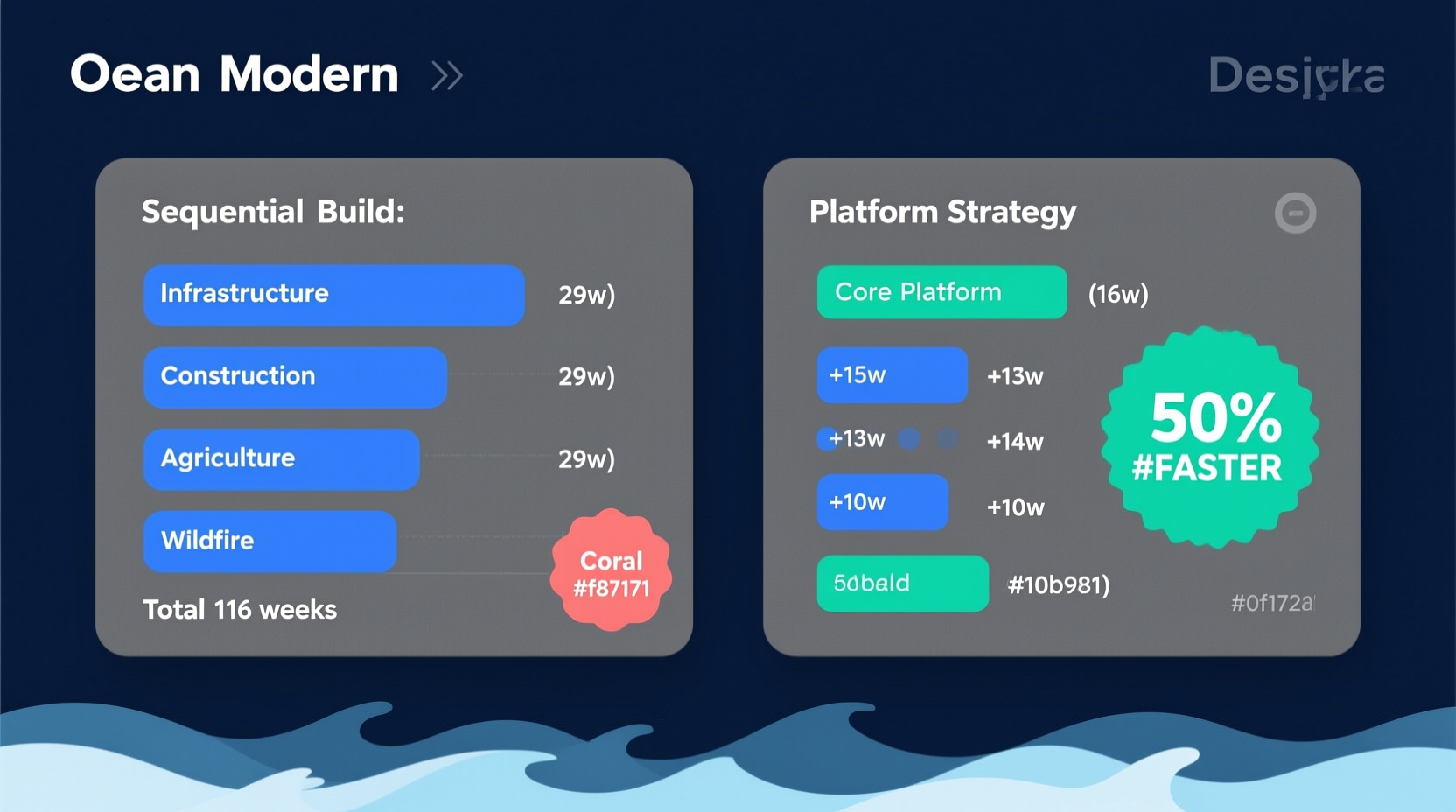

The Big Idea: 80% Overlap = 4x Market Leverage

The Core Insight

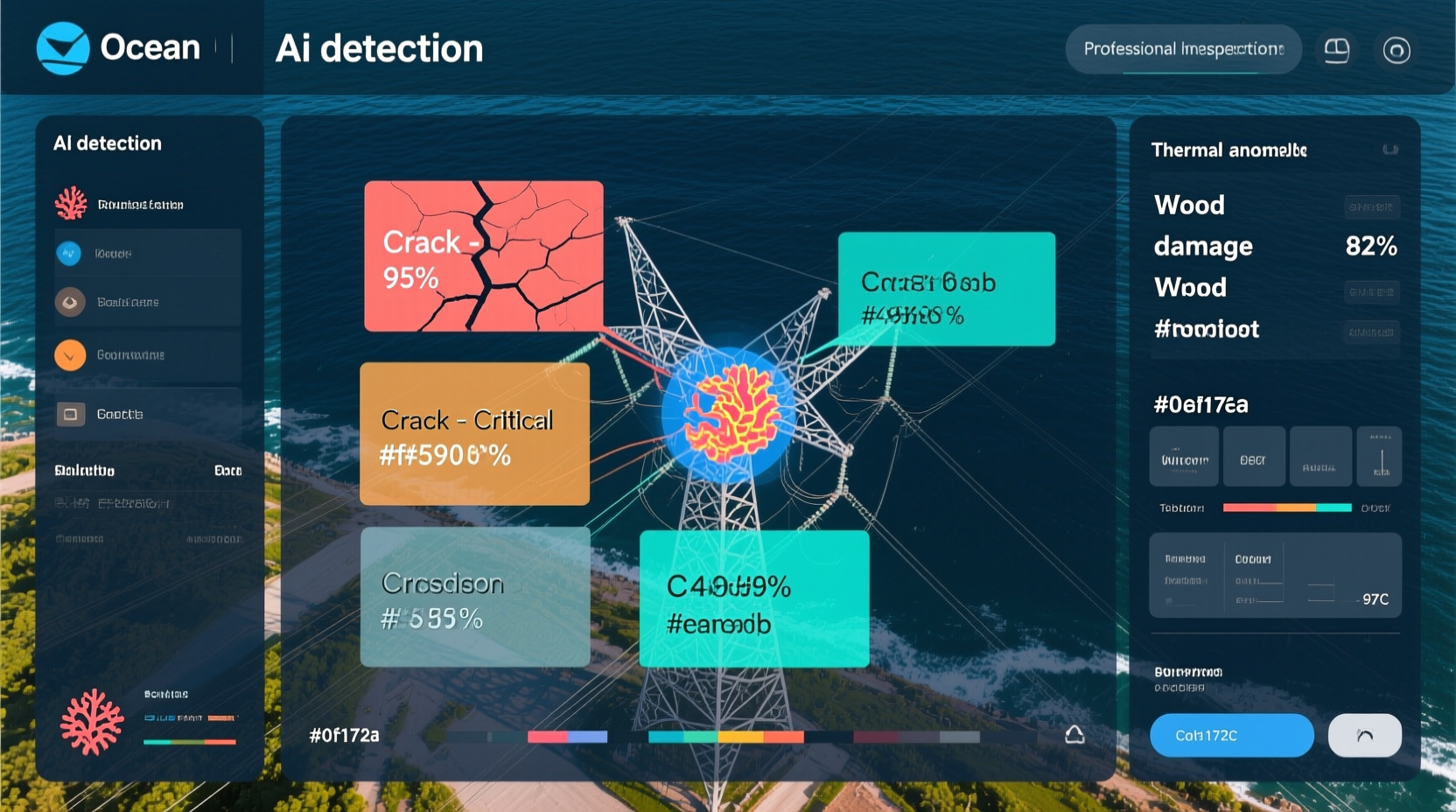

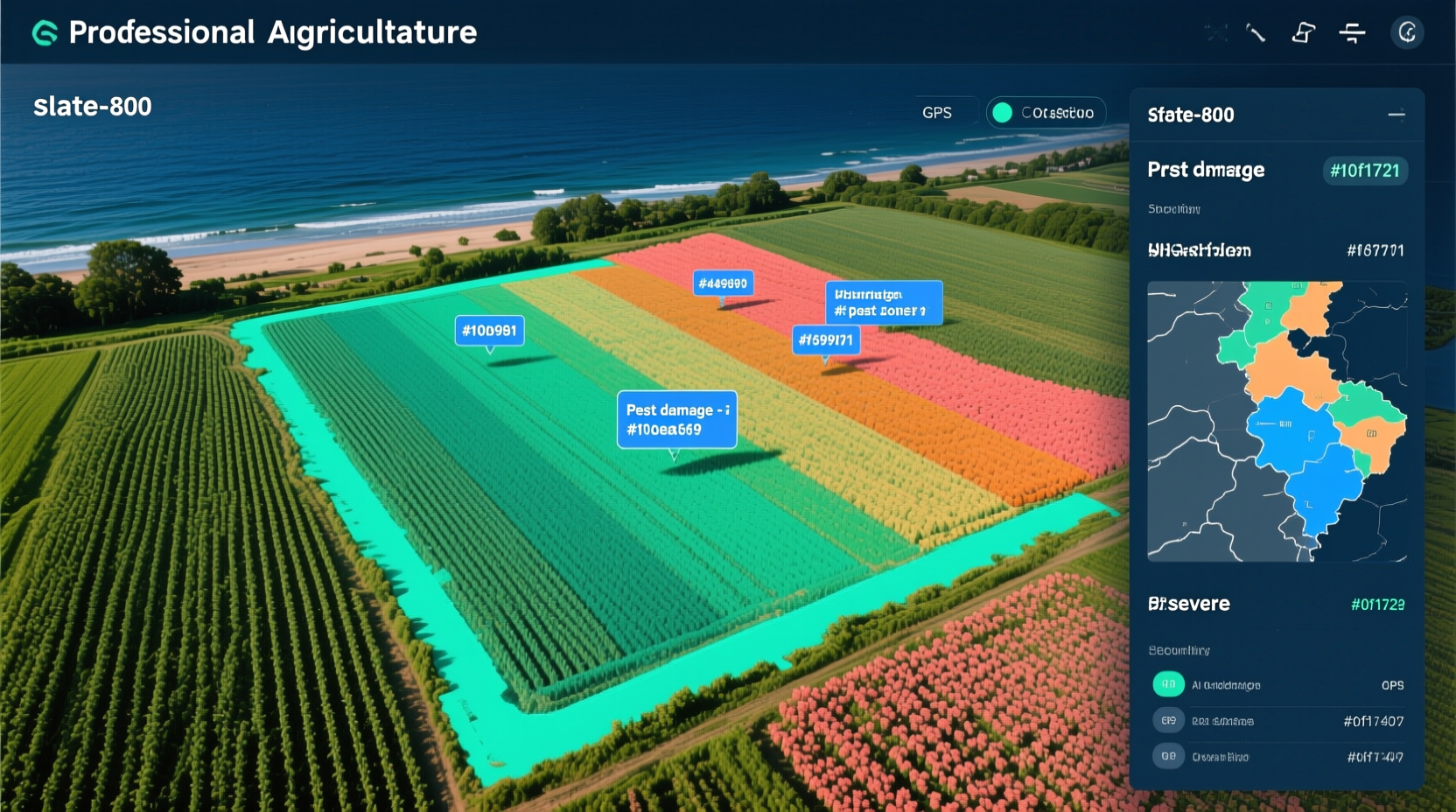

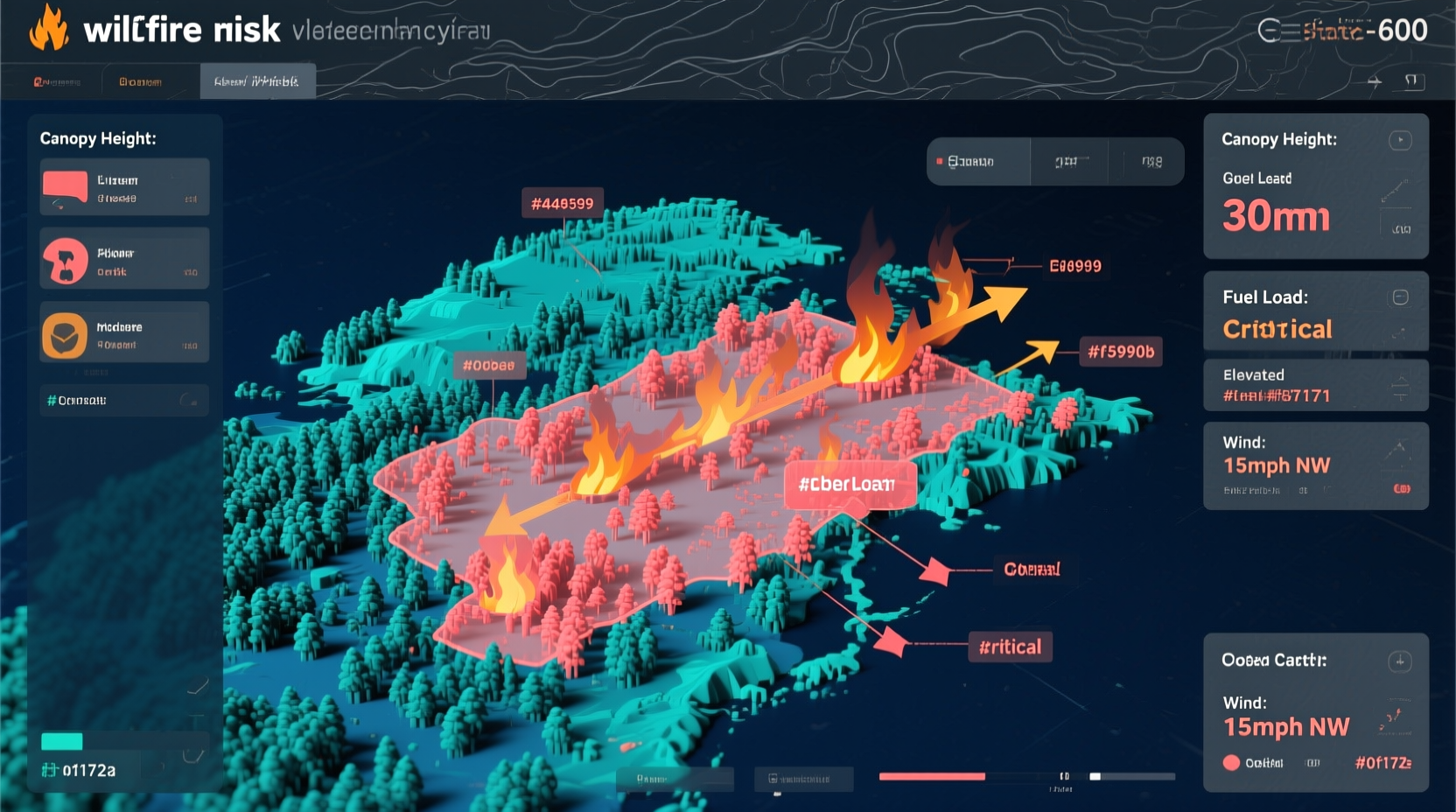

Here's the breakthrough: infrastructure inspection, construction progress tracking, agricultural monitoring, and wildfire assessment all need the exact same foundational capabilities. They all need to:

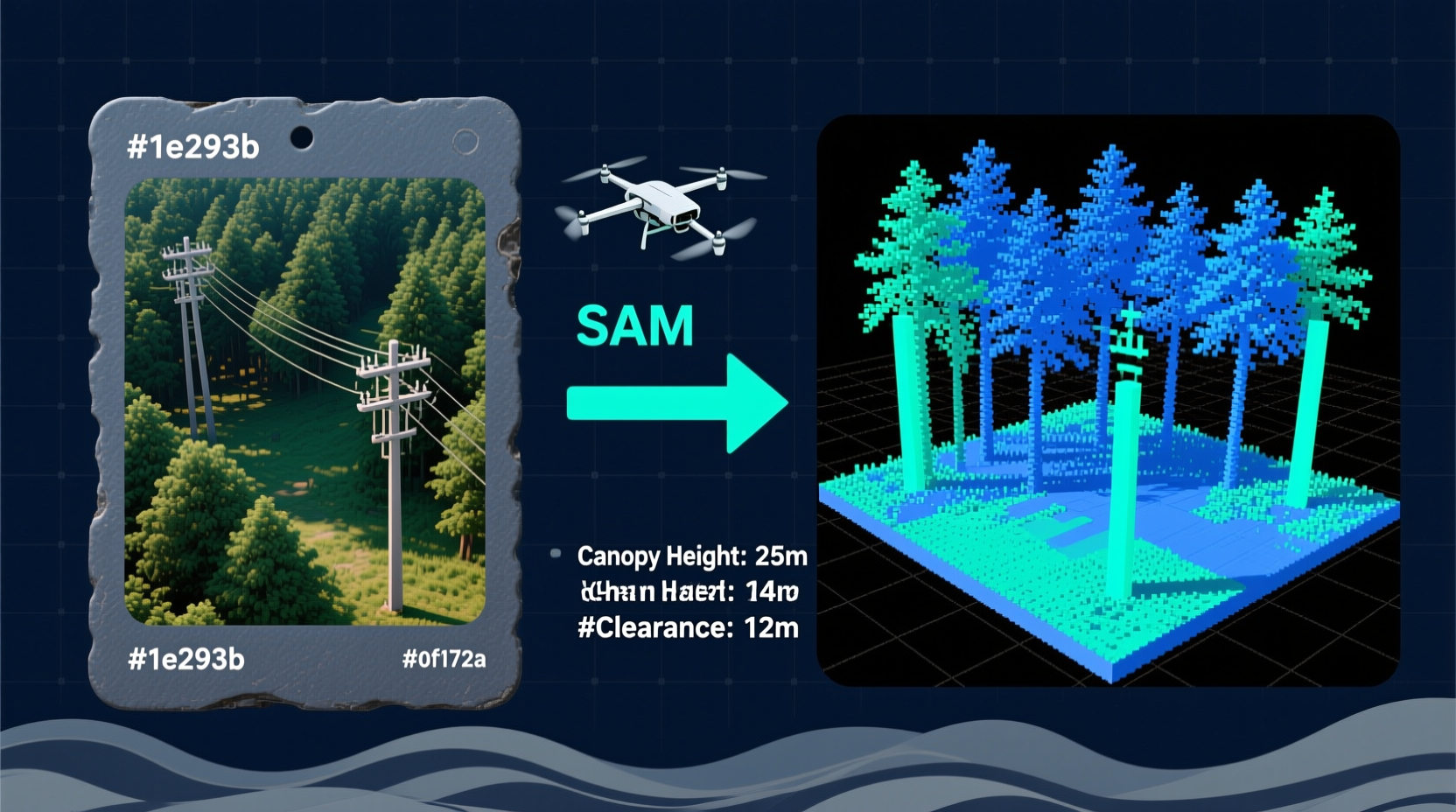

- Convert drone images (aerial photographs captured by UAVs using visible light, thermal, or multispectral cameras) into georeferenced maps, where georeferencing assigns real-world geographic coordinates to image pixels so they can be placed accurately on a map

- Detect changes over time through temporal analysis (comparing images taken at different times to identify what has changed)

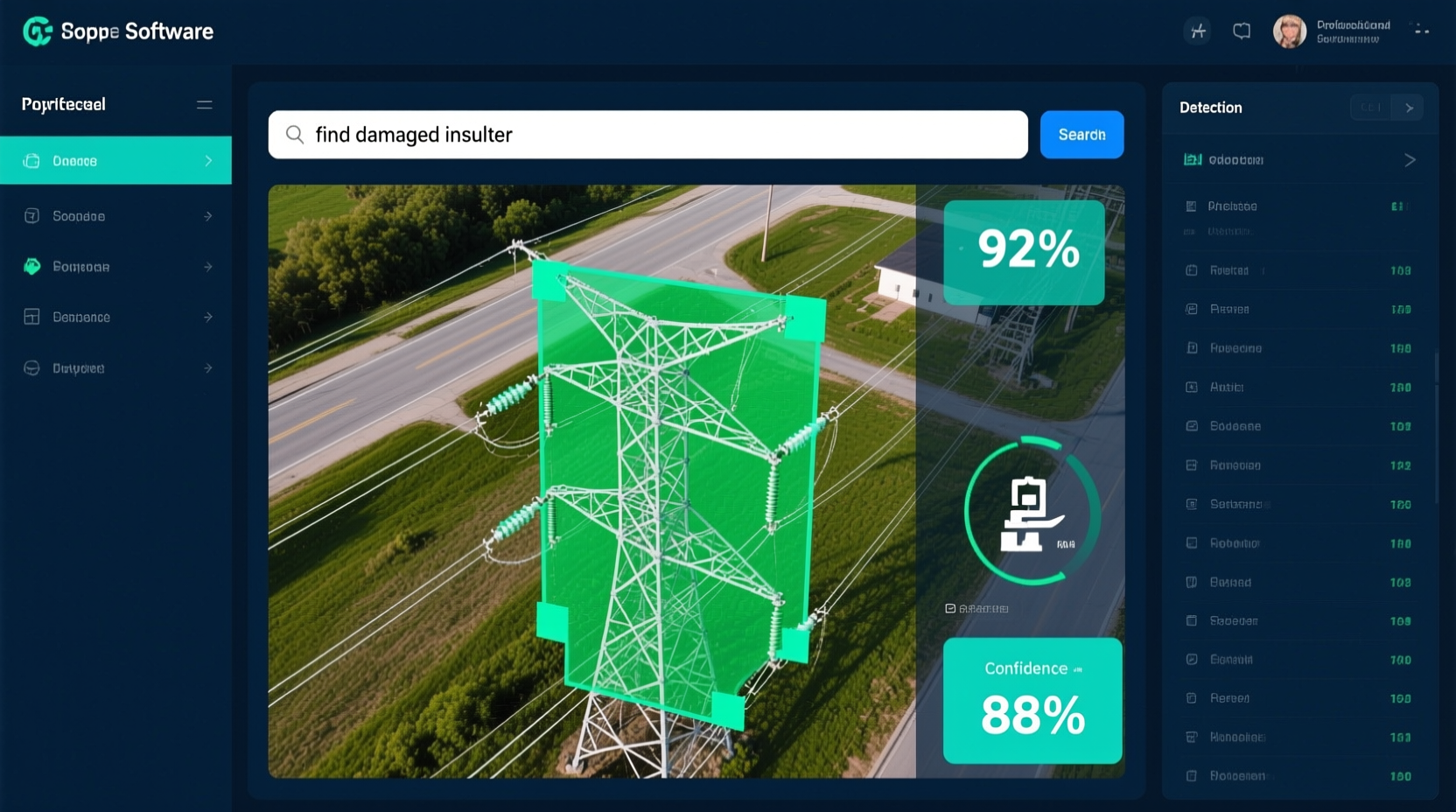

- Annotate objects with bounding boxes (rectangular regions drawn around objects of interest) and segmentation masks (pixel-precise outlines where every pixel is labeled as belonging to the object or background)

- Generate PDF reports with measurements

- Integrate with enterprise systems (large-scale business software like Maximo, Procore, and SAP) via APIs

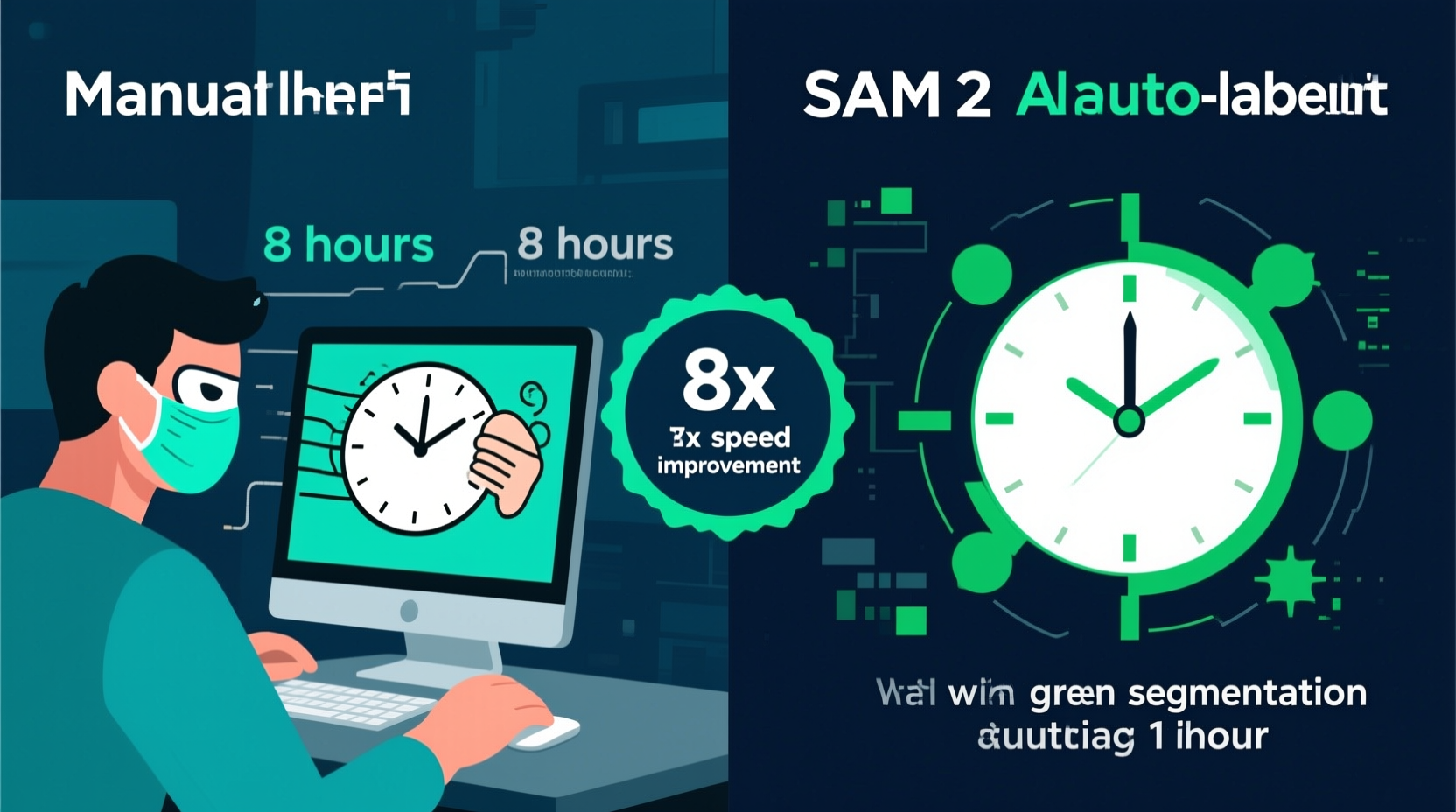

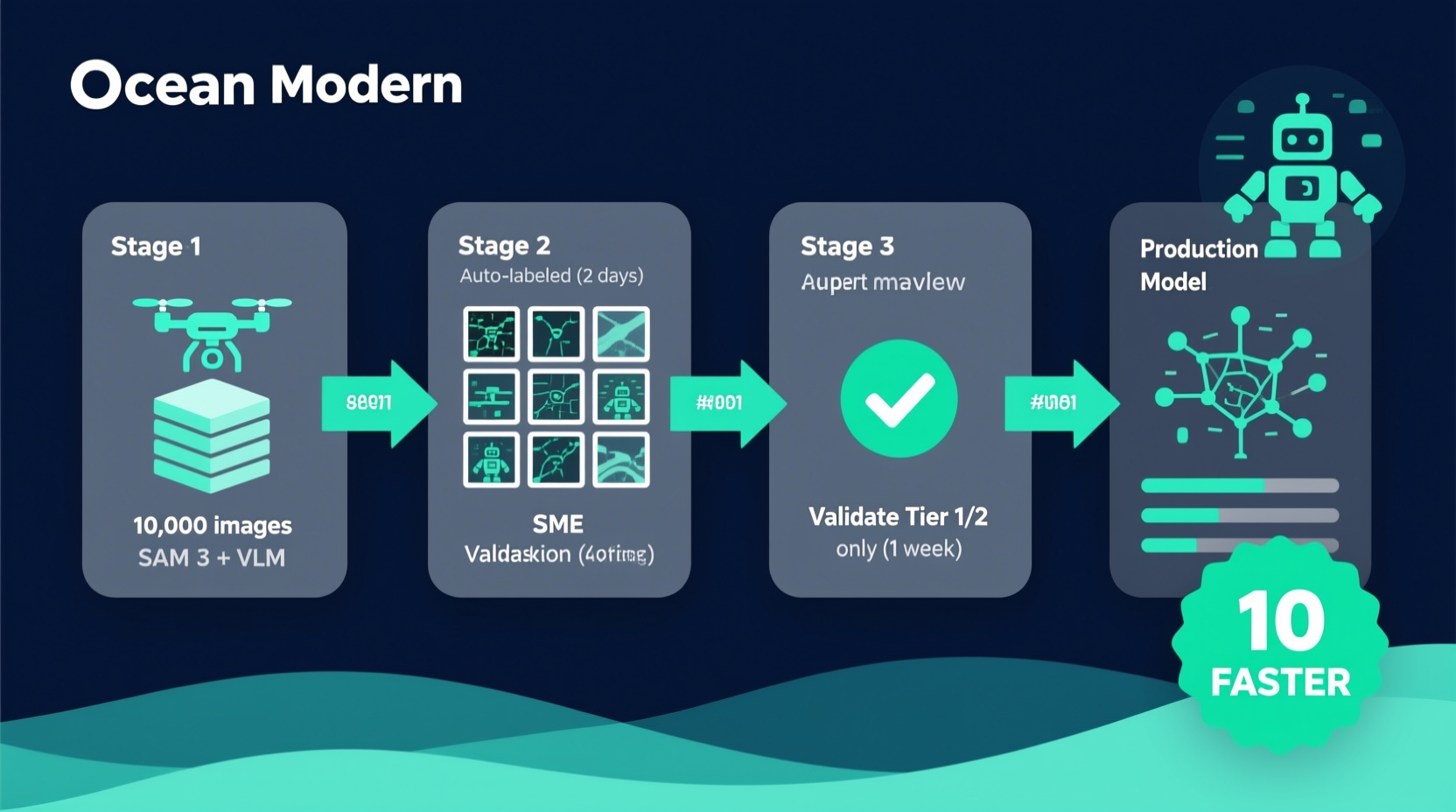

- Manage training data and continuously improve ML models (machine learning models: neural networks trained to recognize patterns)

The only things that differ are: what specific objects we're detecting and which enterprise systems we integrate with.
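One way to picture this split is a shared pipeline parameterized by a small per-vertical configuration. The sketch below is illustrative only: `VerticalConfig`, `build_pipeline`, the stage names, and the example object classes are hypothetical, and the pairing of verticals with specific enterprise systems is an assumption rather than something stated here.

```python
from __future__ import annotations

from dataclasses import dataclass

# Shared stages, mirroring the capability list above (names are hypothetical).
SHARED_STAGES = (
    "georeference",
    "detect_changes",
    "annotate",
    "generate_report",
    "integrate",
    "manage_training_data",
)


@dataclass(frozen=True)
class VerticalConfig:
    """The only per-vertical settings: detection targets and integrations."""

    name: str
    target_objects: tuple[str, ...]  # what the detector looks for
    integrations: tuple[str, ...]    # which enterprise systems receive results


def build_pipeline(config: VerticalConfig) -> list[str]:
    """Return the stage list for a vertical; only two stages are parameterized."""
    steps = []
    for stage in SHARED_STAGES:
        if stage == "annotate":
            steps.append(f"annotate[{', '.join(config.target_objects)}]")
        elif stage == "integrate":
            steps.append(f"integrate[{', '.join(config.integrations)}]")
        else:
            steps.append(stage)
    return steps


# Illustrative configurations; object classes and pairings are assumptions.
infrastructure = VerticalConfig("infrastructure", ("crack", "corrosion"), ("Maximo",))
construction = VerticalConfig("construction", ("crane", "scaffold"), ("Procore",))

if __name__ == "__main__":
    for cfg in (infrastructure, construction):
        print(cfg.name, build_pipeline(cfg))
```

In this sketch, the two pipelines differ only at the `annotate` and `integrate` stages, which is the overlap argument in miniature.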

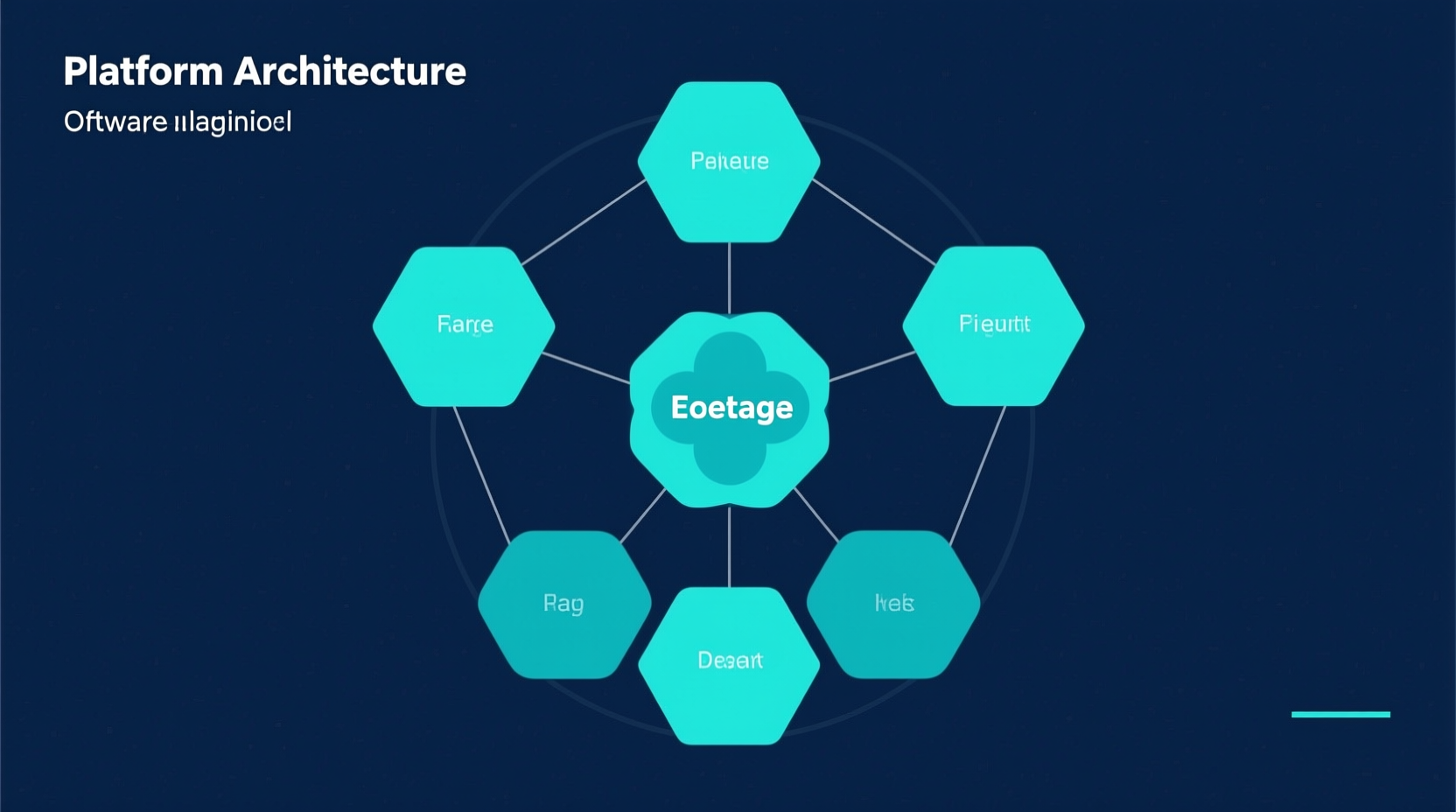

Platform Component Overlap Across Verticals

Image Processing

Bulk upload, quality scoring (automated assessment of image sharpness, exposure, and coverage to flag unusable captures), and EXIF extraction (reading embedded camera metadata: GPS coordinates, altitude, timestamp, camera settings). Identical for all verticals.
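As a small illustration of the EXIF step: GPS coordinates in EXIF are stored as degrees, minutes, and seconds (each a rational number) plus a hemisphere reference, so they have to be converted to signed decimal degrees before mapping. The sketch below assumes the tags have already been read into plain `(numerator, denominator)` tuples by some EXIF reader. The function name and input shape are hypothetical.

```python
from fractions import Fraction


def dms_to_decimal(dms, ref):
    """Convert EXIF-style degrees/minutes/seconds to signed decimal degrees.

    dms: three (numerator, denominator) pairs, as EXIF rationals.
    ref: hemisphere reference, one of "N", "S", "E", "W".
    """
    if ref not in ("N", "S", "E", "W"):
        raise ValueError(f"unknown hemisphere reference: {ref!r}")
    degrees, minutes, seconds = (Fraction(n, d) for n, d in dms)
    value = degrees + minutes / 60 + seconds / 3600
    # Southern and western hemispheres are negative in decimal notation.
    if ref in ("S", "W"):
        value = -value
    return float(value)


if __name__ == "__main__":
    # 37 deg 46 min 29.94 sec N, with seconds stored as the rational 2994/100
    print(dms_to_decimal(((37, 1), (46, 1), (2994, 100)), "N"))
```

Because this conversion depends only on the image metadata, it would be the same for every vertical.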

Georeferencing

Orthomosaic stitching (an orthomosaic is a geometrically corrected aerial image composite where all pixels are at the same scale) and UTM coordinate systems (Universal Transverse Mercator, a standard coordinate system for mapping). Near-identical across verticals.
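To make the UTM part concrete, here is a minimal sketch of choosing a UTM zone from a capture location. It uses the standard 6-degree zone rule and the WGS 84 / UTM EPSG numbering (326xx for the northern hemisphere, 327xx for the southern), and deliberately ignores the special-case zones around Norway and Svalbard. The function names are hypothetical.

```python
import math


def utm_zone(lon):
    """Return the UTM zone number (1-60) for a longitude in degrees."""
    # Wrap into [-180, 180) so that 180 degrees east maps to zone 1.
    lon = ((lon + 180.0) % 360.0) - 180.0
    return int(math.floor((lon + 180.0) / 6.0)) + 1


def utm_epsg(lat, lon):
    """Return the WGS 84 / UTM EPSG code for a latitude/longitude pair."""
    base = 32600 if lat >= 0 else 32700
    return base + utm_zone(lon)


if __name__ == "__main__":
    print(utm_zone(-122.4), utm_epsg(37.77, -122.4))
    print(utm_zone(151.2), utm_epsg(-33.9, 151.2))
```

The zone depends only on where the imagery was captured, not on what is being inspected.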

Change Detection

Temporal overlay (aligning images from different dates to compare what changed) and diff highlighting. Same algorithms, different thresholds.
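The "same algorithms, different thresholds" point can be sketched with a simple per-pixel difference. This is a minimal illustration rather than the platform's actual method: it assumes the two images are already aligned, the same size, and reduced to grayscale grids. The function names and threshold values are hypothetical.

```python
def change_mask(before, after, threshold):
    """Mark pixels whose absolute intensity difference exceeds the threshold."""
    if len(before) != len(after) or any(
        len(rb) != len(ra) for rb, ra in zip(before, after)
    ):
        raise ValueError("images must have identical dimensions")
    return [
        [abs(a - b) > threshold for b, a in zip(row_b, row_a)]
        for row_b, row_a in zip(before, after)
    ]


def changed_fraction(mask):
    """Return the share of pixels flagged as changed (0.0 for an empty mask)."""
    total = sum(len(row) for row in mask)
    changed = sum(cell for row in mask for cell in row)
    return changed / total if total else 0.0


if __name__ == "__main__":
    before = [[10, 10], [10, 10]]
    after = [[10, 40], [25, 10]]
    # Same function, two illustrative thresholds standing in for two verticals.
    print(changed_fraction(change_mask(before, after, threshold=20)))
    print(changed_fraction(change_mask(before, after, threshold=10)))
```

Only the `threshold` argument changes between the two calls. The differencing code is shared.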

Reports & APIs

PDF templates and a webhook system (HTTP callbacks that notify external systems when events occur, such as job complete or anomaly detected). Fully reusable.
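A webhook notification is ultimately a small JSON document sent by HTTP POST to a URL the customer registers. The sketch below only builds that document and does not send it. The event names, field names, and `build_webhook_payload` are hypothetical, chosen to mirror the two example events mentioned above.

```python
import json
from datetime import datetime, timezone

# Illustrative event names based on the examples above (assumed, not a real API).
EVENT_TYPES = {"job.complete", "anomaly.detected"}


def build_webhook_payload(event_type, job_id, data, occurred_at=None):
    """Serialize an event as a JSON string suitable for an HTTP POST body."""
    if event_type not in EVENT_TYPES:
        raise ValueError(f"unsupported event type: {event_type!r}")
    timestamp = occurred_at or datetime.now(timezone.utc)
    body = {
        "event": event_type,
        "job_id": job_id,
        "occurred_at": timestamp.isoformat(),
        "data": data,  # vertical-specific details travel here
    }
    return json.dumps(body, sort_keys=True)


if __name__ == "__main__":
    print(build_webhook_payload("anomaly.detected", "job-42", {"count": 3}))
```

Keeping vertical-specific details inside `data` is one way the envelope could stay identical across verticals.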

Data Management

SAM integration and labeling pipelines (workflows for creating, reviewing, and exporting training data annotations). Shared infrastructure.